An AI agent will never pause and ask whether it should be looking at sensitive data. It simply acts, and without governance context, acting fast means failing fast.

For many organizations, agents can take existing flows and increase speed, efficiency, and sometimes even the quality of the output itself. The caveat is that word: “sometimes.” It points to a principle that holds across every AI-touched workflow: output is only as trustworthy as the context feeding it. Observability, data quality, data lineage, and more all assume that the agent knows where it is and what it’s talking about. A context layer gives the agent that grounding. Without one, outputs become faulty, and because agents build on their own results, faulty outputs don’t just persist; they compound. One response is, “Well, the human in the loop should be able to identify those issues and correct them.” But doesn’t it make more sense to address the root issue than to give up efficiency because fixing it takes work? Depending on the field, the results can be catastrophic.

In analytics, you get a report that is slightly off. In data quality, you get a few NULLs in a table. But in governance and classification, you get a misconfigured security posture: a PII tag that covers a random account ID instead of a Social Security number. That is a security issue, and one with direct customer impact.

Agents can generate the most incredible dashboard you’ve ever seen. But for changes with enterprise-wide or security impact, the margin for error collapses. If you aren’t confident that an agent knows your security posture, you have lost confidence in the posture itself. An inflated dashboard may affect one small corner of analytics; a misconfigured governance tag can affect data, infosec, and analytics teams alike. And if a misconfiguration leads to a breach, end customers and shareholders are affected as well.

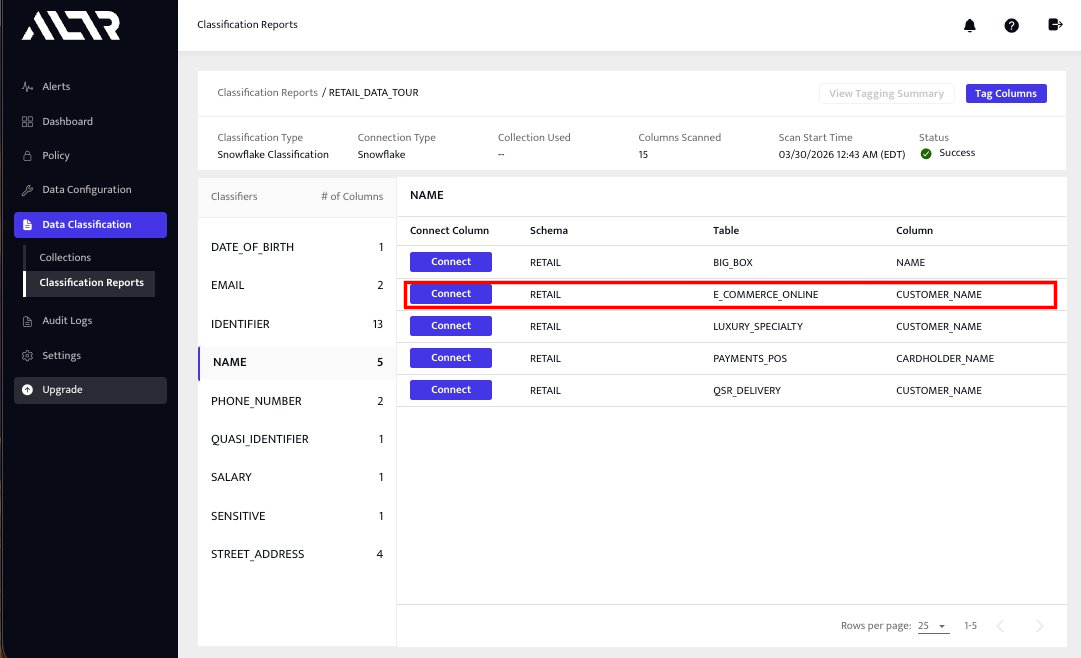

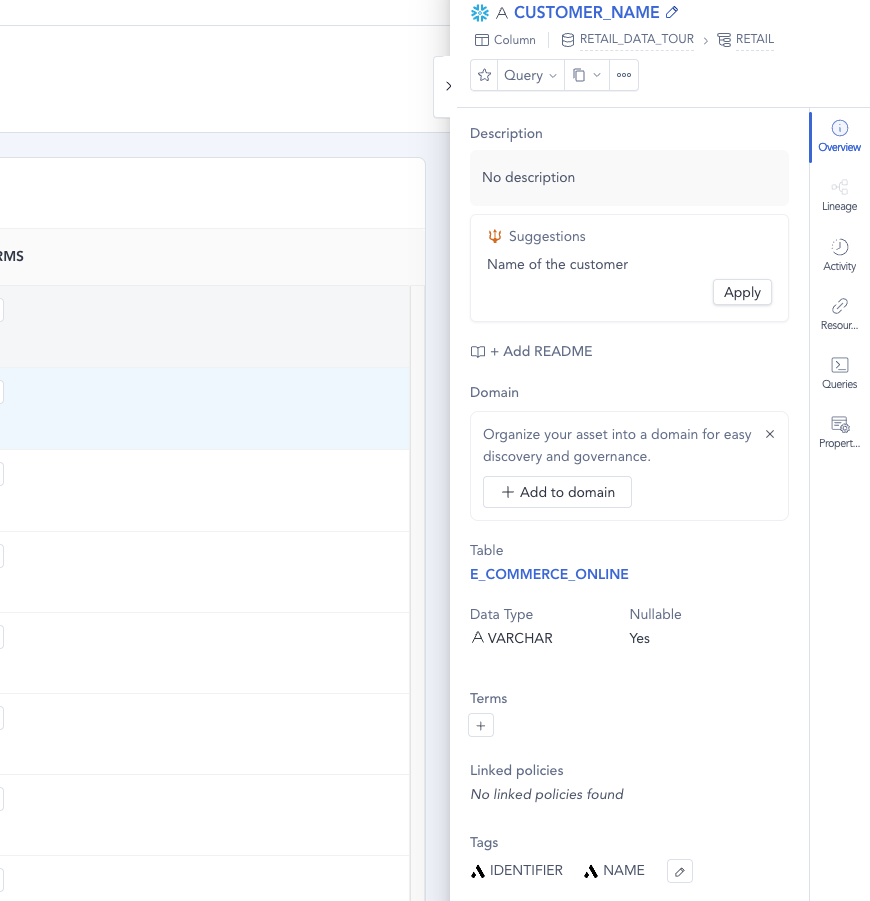

Through the ALTR Classification Workflow, governance tags are created automatically from ALTR’s Classification Report, and the corresponding Snowflake assets in Atlan are tagged with the appropriate governance tag.

ALTR’s Classification integration with Atlan gives agents trusted governance context. Using ALTR’s classification engine within Atlan’s Enterprise Context Layer ensures that your governance tags are created from a trusted source of truth. Whether you use ALTR’s native classification or one of the other providers built into ALTR, such as Snowflake’s in-house engine or Google’s DLP, every tag is backed by a real classification scan of your data.

Imagine this: in a massive data ecosystem, your data team wants to implement governance tags in Atlan. With so many tables and columns, they want to use an agentic workflow, and they have two options. First, they can have the agent create tags based only on what’s in Atlan: it views column names, judges what looks sensitive, and decides from that limited context. The likely result is tags that are either too granular or too broad, missing the sweet spot where policies and other downstream actions can take effect. Second, they can have the agent run the ALTR Classification Workflow, sourced from a scan that the security team has already created, run, and verified, and that reviewed an anonymized, legitimate sample of the data. In that case, the agent doesn’t need to decide anything. It only needs to trigger the workflow and verify that it succeeded.
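To make the contrast concrete, here is a toy Python sketch. It is not ALTR’s classification engine or any Atlan API: the column names, sample values, name hints, and the simplified SSN pattern are all hypothetical, chosen only for illustration. It shows how guessing from column names can tag a harmless account ID as PII while missing Social Security numbers stored under an uninformative name, whereas checking sampled values catches them.

```python
import re

# Hypothetical toy data: column name -> small anonymized sample of values.
# Nothing here comes from ALTR or Atlan; it only illustrates name-guessing
# versus value-scanning.
SAMPLE_COLUMNS = {
    "account_id": ["A-100234", "A-100871", "A-101562"],
    "ref_num": ["123-45-6789", "987-65-4321", "555-12-3456"],
}

# Hypothetical name-based heuristic: flag any column whose name "looks" sensitive.
SENSITIVE_NAME_HINTS = ("id", "ssn", "social")


def tag_by_name(column: str) -> bool:
    """Guess sensitivity from the column name alone (metadata-only context)."""
    name = column.lower()
    return any(hint in name for hint in SENSITIVE_NAME_HINTS)


# Simplified SSN shape check (NNN-NN-NNNN); real classifiers use far richer rules.
SSN_PATTERN = re.compile(r"^\d{3}-\d{2}-\d{4}$")


def tag_by_scan(values: list[str], threshold: float = 0.8) -> bool:
    """Flag a column as SSN-like if enough sampled values match the pattern."""
    if not values:
        return False
    matches = sum(1 for v in values if SSN_PATTERN.match(v))
    return matches / len(values) >= threshold


if __name__ == "__main__":
    for col, sample in SAMPLE_COLUMNS.items():
        print(f"{col:12} name-guess={tag_by_name(col)} scan={tag_by_scan(sample)}")
```

Here the name heuristic flags `account_id` (it contains “id”) and misses `ref_num`, while the value scan does the opposite. A real classification scan uses much richer detectors and governed sampling; the sketch only shows why tags grounded in the data itself are more reliable than tags guessed from metadata.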

In any field, agents work best when they make as few decisions as possible. As a well-known line from an internal IBM employee document puts it:

“A computer can never be held accountable. Therefore, a computer must never make a management decision.”

Atlan’s design as the Enterprise Context Layer reflects exactly this principle. Given proper structure, guardrails, and context, agents aren’t making decisions, and they never should be. They are simply making a process faster using the tools provided to them. It’s not about the agent; it’s about the tools and context. This is especially true for security-related flows, where the “decisions” your agent makes could be the difference between a strong security posture and exposed sensitive data.

With proper context from a trusted tool like ALTR flowing through Atlan’s Enterprise Context Layer, enterprises can optimize these workflows without increasing the risk of non-deterministic results. Trusted source, trusted results. It’s that simple.

Learn more about ALTR’s integration, and others, at Atlan Activate on April 29th.